The Agentic Boundary Is the Gray Zone Between Insight and Action

The real boundary in agentic AI is the gray zone where candidate actions outpace human decision capacity.

Most discussions about agentic AI still assume a simple story: if autonomous systems can do more of the work people used to do, organizations should become dramatically faster and leaner.

So far, they rarely do.

In practice, many teams find that agentic systems remove labor from the middle of a workflow while making the downstream parts more strained.

Drafting gets faster. Monitoring gets faster. Analysis gets faster. Triage gets faster. Then the organization hits a new wall: someone still has to decide what matters, whether the evidence is good enough, who should act, what needs escalation, what can be trusted, and what should happen next. The bottleneck has moved downstream instead of disappearing.

That is the real organizational challenge behind the current wave of agentic and autonomous systems. The problem is that many organizations have not redesigned the process that has to turn output into commitment.

When that happens, AI does not create compounding leverage. It creates or shifts bottlenecks that block the expected ROI.

The New Constraint Is Decision Velocity

The first-order benefit of agentic systems is real: they compress routine execution. Work that once required repeated manual effort can now happen continuously in the background: scanning, summarizing, comparing, flagging, drafting, monitoring, classifying.

That changes the economics of the workflow. But it decouples the final judgment from the preceding work.

In many organizations, consultants, analysts, or specialist teams used to act as a compression layer. They did work, filtered the space, narrowed down the ambiguity and ultimately surfaced the few things leadership had to decide on. In effect, they controlled decision velocity by deciding what reached the decision-maker in the first place.

When agentic systems take over much of that flow, they can increase the number of candidate moves far faster than the downstream human system can sort through, decide on, and commit to them. Instead of a few filtered choices, the organization may now face a much larger set of plausible actions, tests, warnings, and recommendations. The remaining human work becomes more concentrated and more consequential. On a personal note, it’s also decidedly less fun. There are fewer but higher-stakes approval checkpoints now:

- Is this important enough to interrupt someone with?

- Is the evidence good enough to support a real decision?

- Is this a recommendation, a warning, or just noise?

- Who owns the next move?

- Does this need escalation?

- Can this be approved, sent, or acted on safely?

This is why organizations can feel simultaneously faster and more congested. The system produces more, the organization can consider more, but it does not necessarily decide faster.

That gap matters more than most AI deployment plans acknowledge.

You can think of it as an "agentic absorption gap": the distance between how fast a system can generate candidate actions and insights and how fast the surrounding organization can verify, prioritize, commit to, and act on them. The gap widens whenever option volume rises faster than decision velocity.
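As a rough illustration, the gap can be pictured as a backlog that grows whenever candidates arrive faster than they are decided. The sketch below is a toy model, not a measurement method: the function name, the weekly rates, and the assumption that both rates stay constant are all hypothetical.

```python
def absorption_backlog(generated_per_week, decided_per_week, weeks):
    """Return the undecided backlog at the end of each week.

    Toy model (assumption): both rates are constant and every
    candidate needs exactly one human decision.
    """
    backlog = 0
    history = []
    for _ in range(weeks):
        backlog += generated_per_week
        # Humans can only clear what exists, up to their weekly capacity.
        backlog -= min(backlog, decided_per_week)
        history.append(backlog)
    return history


# Before agents: 5 candidates a week, capacity for 5 decisions.
before = absorption_backlog(5, 5, weeks=4)    # [0, 0, 0, 0]
# After agents: 50 candidates a week, same decision capacity.
after = absorption_backlog(50, 5, weeks=4)    # [45, 90, 135, 180]
```

In this toy case the upstream system became ten times more productive, yet the only thing that changed downstream is the size of the queue.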

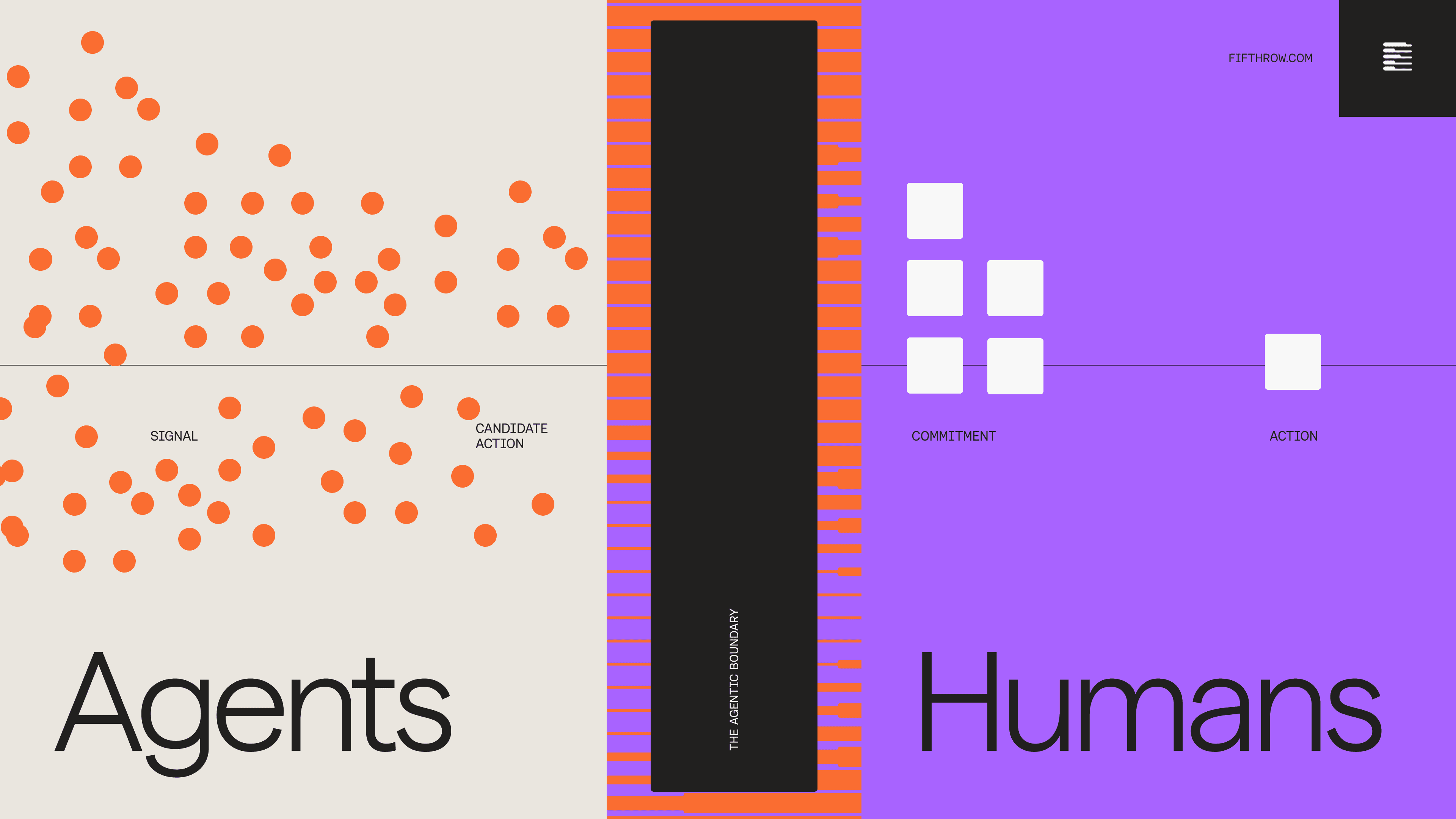

The Agentic Boundary

In reality, most organizations encounter a gray zone at the hand-off, where high-velocity agentic systems doing high-effort, low-leverage work meet the high-leverage decisions and commitments made by humans.

It is better to think of the boundary as a fuzzy band around commitment, almost like error bars around the question, "Is this ready to become a real decision?"

Inside that band, four things are usually still unstable:

- ownership is fuzzy

- evidence is directionally useful but not yet strong enough to act on without debate

- escalation rights are unclear

- manual rescue is quietly carrying more of the workflow than anyone admits “because AI”

That is why the boundary often feels hard to define in the moment. The output is not obviously useless, but it is not cleanly decision-ready either. It sits in a gray zone between candidate action and organizational commitment, where teams can sense relevance, but still do not know whether they should trust it, prioritize it, escalate it, or hold it.

In other words, the boundary is not where the AI stops. It is where organizational decision certainty starts to thin out and cycle time starts to stretch.

Why More Output Often Creates More Drag

The common response to this problem is to assume the AI is not good enough yet. Sometimes that is true. But in many cases the real issue is elsewhere.

Most enterprise workflows already contain hidden friction:

- ownership is ambiguous

- evidence standards are inconsistent

- handoffs depend on informal context

- meetings, reviews, and approvals run on periodic cadence

- nobody agrees on what deserves escalation

- important decisions still require political alignment, not just information

When a new agentic layer starts producing more work more quickly, those weaknesses become visible. The organization receives more candidate actions without having made the downstream decision logic explicit.

That is why the failure mode often looks strange from the outside. Teams say they have more intelligence than before, but decisions do not seem much faster. They say automation is running, but people still feel overloaded. They say the system is productive, but the business impact is hard to see. In reality, the bottleneck has shifted from producing options to choosing among them.

The issue has shifted from signal acquisition to how signal becomes action.

This is also why the most important operating question is not, "Where can we deploy more agents?" It is, "What process will filter, prioritize, and commit to what the agents produce?"

How To Spot The Boundary

Once you stop treating the boundary as an abstract concept, it becomes easier to see. It shows up in recurring operational signals.

The strongest signal is that cycle time from signal to decision is not falling, even though upstream production is. The system gets faster, but the downstream decision queue does not.

A second signal is that a decision-maker, operator, or middle layer becomes the new queue. Outputs are waiting for human prioritization. Candidate actions pile up faster than someone can sort them into what actually matters now.

A third signal is manual triage becoming normal. Someone keeps filtering, compressing, ranking, or translating large amounts of output into the few items leadership can actually decide on. When that human filtering layer becomes standard, the boundary is already present.

A fourth signal is repeated re-interpretation. The same output is summarized again in email, again in a meeting, and again in a follow-up document because the original artifact did not carry enough judgment to travel on its own.

There are also signals of action-capacity mismatch:

- more candidate actions are surfaced than the organization can realistically commit to

- the output seems important, but nobody clearly owns the next move

- confidence sounds high, but the evidence base is thin or contradictory

- the organization keeps debating urgency because escalation rules were never made explicit

- false positives are creating avoidable review work

- teams keep asking some version of, "What actually matters now?"

Readership, meaning whether outputs are read at all, still matters, but mostly as a subordinate signal. The deeper issue is that the system may be producing valuable material faster than the organization can turn it into decisions.

A Useful Example: When Agentic Volume Outruns Human Commitment

One place this shows up clearly is in idea generation, validation, and market testing workflows.

In the older model, consultants or internal strategy teams often acted as a hard filter. They did the exploration, ran the analysis, and returned with a small number of options. Leadership never had to sort through the full space. They only had to choose among the most important items that had already been compressed for them.

In the agentic model, that compression layer often weakens. A good workflow can now surface 10x or 20x more candidate ideas, experiments, warnings, or next steps than before. That sounds like a pure gain until it reaches the same downstream humans who still have to decide what gets funded, prioritized, approved, escalated, or acted on.

Take a simple innovation example. If a team could previously market-test five to ten ideas per year, and now a system can help surface or validate one hundred, the raw capability is obviously better. But how many of those one hundred can the organization really act on if the downstream decision process has not changed?

That is why many organizations quietly recreate a human consultant layer after the fact. Someone has to filter the flood, compress it into the few things that matter, and protect decision-makers from drowning in plausible-but-uncommitted options.

This is not a sign that the system has failed. It is a sign that the organization has shifted where judgment lives, but in most cases has not planned for that shift or been explicit about it, either in its process redesign or with its people. That creates friction and frustration.

If that filtering layer remains an ad hoc rescue function, the organization never gets the full leverage of the system. The same person or team becomes the new bottleneck. If instead the layer is designed explicitly, with clear triage rules, thresholds, and escalation logic, the workflow can improve over time.

Another Example: Speed Gains That Move the Constraint Elsewhere

A useful case from outside the usual AI hype cycle comes from RTL Technology's moonshot work on GenAI delivery (soon to be published by Giovanni Piccirilli, a former CTO of RTL). The interesting lesson is not that AI accelerated coding, which plenty of teams can show. It is that meaningful gains appeared only after the operating model changed with it.

RTL simplified the work around the technology: smaller end-to-end teams, fewer handovers, protected capacity, tighter feedback loops, faster decisions. In other words, the AI was useful because the surrounding process was redesigned to convert new speed into faster decisions. And then the bottleneck moved again.

Once the internal loop sped up, the main drag shifted to external systems, integrations, and surrounding dependencies. That is exactly the point. AI does not magically remove constraints from a system. It changes where the constraint sits.

Organizations that expect frictionless acceleration are often disappointed. Organizations that expect constraint migration are more likely to design for the next problem before it stalls them.

The Management Layer Changes Too

This is not only a workflow problem. It is also a management problem.

Andrew Chen (GP @ a16z) recently made a useful observation about product management in an agentic world: the role starts to split between human coordination and agent orchestration. One side still deals with stakeholders, legitimacy, prioritization, risk, and direction. The other increasingly deals with prompts, evaluations, monitoring, failure patterns, workflows, and system behavior.

That split matters because it explains why old operating rhythms stop fitting.

When systems act continuously, management cannot rely only on periodic narrative rituals. Reviews shift toward anomaly triage, simulation, monitoring, and intervention. Teams need better ways to inspect what changed, what failed, what drifted, and what should be escalated.

But the slower human layer does not disappear. Strategy still has to be set. Risk still has to be judged. Trade-offs still have to be owned. Trust still has to be earned across functions. That means organizations now have two speeds to manage at once: the fast loop of system execution and the slower loop of human legitimacy.

If they optimize only for the first one, the second becomes the hidden bottleneck.

Levels Of Boundary Problems

Not all boundary problems are equal. Treating them as one generic category leads teams either to overreact or to normalize issues that should trigger redesign.

A simple ladder is more useful.

Level 1: Option overload

At this level, the main issue is that too many candidate actions are being generated relative to what the organization can realistically pay attention to.

Typical signs:

- too many candidate actions relative to human attention

- too many ideas, tests, or alerts reaching the same downstream people

- recurring outputs that feel busy but not important

- more possibilities than the team can responsibly sort through

The fix here is usually better filtering and routing, not heavier governance.

Level 2: Triage bottleneck

Here the outputs are often useful, but humans still have to manually filter, compress, rank, or rewrite them before decisions can happen.

Typical signs:

- the same operator keeps acting as a filtering layer

- workflows depend on manual compression before leadership can engage

- outputs are acceptable only after human condensation

- candidate actions are waiting for someone to decide what is worth deciding

This is the level where many teams stall. The system is promising enough to keep, but not trustworthy enough to scale casually.

The fix here is usually explicit triage design: install a first-pass filtering layer, define what gets compressed for whom, and make sure humans are ranking a narrower decision set instead of reprocessing raw abundance.

Level 3: Decision ownership gap

At this level, the issue is no longer just output quality. The outputs are good enough to matter, but nobody clearly owns the next decision, commitment, approval, or escalation.

Typical signs:

- multiple teams assume another team will act

- recommendations circulate without a named decision owner

- meetings spend time deciding who should decide

- the organization keeps re-litigating whether the output is decision-ready

This is where the boundary becomes political as well as operational.

The fix here is usually clearer decision ownership: define who owns each action class, who has authority to commit, and what path moves a candidate from recommendation to real organizational decision.

Level 4: Governance choke point

At the highest level, the workflow can no longer be managed informally. The combination of impact, risk, or external consequence means escalation rights, evidence thresholds, and approval authority have to be explicit.

Typical signs:

- unclear approval authority for high-stakes action

- material compliance, legal, brand, or customer risk

- no shared view on what qualifies for interruption or escalation

- outputs are influential enough that ad hoc triage is no longer defensible

At this point, the issue is not "should we add another checker?" It is whether the organization has designed a credible operating model for the function at all.

The fix here is explicit governance: set evidence thresholds, escalation rules, approval authority, and control points so high-stakes actions stop depending on informal judgment or ad hoc coordination.

What Organizations Should Redesign

The practical answer is not to add more checks everywhere. It is to redesign the downstream action pipeline so candidate abundance does not swamp human decision capacity.

Organizations adopting agentic systems should actively do five things during implementation.

1. Install a first-pass filtering layer

Not every output deserves the same destination and not every candidate should reach a decision-maker. Some things should remain memory. Some should be held for batch review. Some should become a lightweight nudge. Some should go to a team-level summary. Some should trigger escalation. If everything becomes an alert, a report, or an approval task, the system will flood the organization.
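A minimal sketch of such a filtering layer, using the five destinations named above, might look like the following. The `Candidate` fields, the 0 to 1 scores, and every cut-off value are hypothetical placeholders; a real organization would set its own.

```python
from dataclasses import dataclass
from enum import Enum


class Destination(Enum):
    MEMORY = "memory"
    BATCH_REVIEW = "batch_review"
    NUDGE = "nudge"
    TEAM_SUMMARY = "team_summary"
    ESCALATION = "escalation"


@dataclass
class Candidate:
    title: str
    impact: float      # hypothetical 0-1 score of business impact
    urgency: float     # hypothetical 0-1 score of time sensitivity
    confidence: float  # hypothetical 0-1 score of evidence strength


def route(c: Candidate) -> Destination:
    """First-pass filter: most output should not reach a decision-maker."""
    if c.confidence < 0.3:
        return Destination.MEMORY          # too weak to act on; keep for context
    if c.impact >= 0.8 and c.urgency >= 0.8:
        return Destination.ESCALATION      # the only path that interrupts someone
    if c.impact >= 0.5:
        return Destination.TEAM_SUMMARY
    if c.urgency >= 0.5:
        return Destination.NUDGE
    return Destination.BATCH_REVIEW
```

The design choice worth noting is that escalation is one narrow branch and everything else defaults to a quieter destination.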

2. Define ownership and decision rights by action class

If an agent surfaces a risk, recommends an action, or drafts a response, who actually owns the next move? If that answer is fuzzy, AI will increase ambiguity faster than it increases speed.
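One way to make that answer explicit is a simple ownership registry that fails loudly when an output has no owner. This is only a sketch: the action classes, role names, and the `UnownedActionError` exception are invented for illustration.

```python
# Hypothetical action classes and owners; a real registry would come from
# the organization's own role definitions.
DECISION_OWNERS = {
    "risk_flag": "head_of_risk",
    "pricing_recommendation": "pricing_lead",
    "customer_reply_draft": "support_team_lead",
}


class UnownedActionError(LookupError):
    """Raised when an agent output has no named decision owner."""


def owner_for(action_class: str) -> str:
    """Return the named owner, or refuse to route an unowned action."""
    try:
        return DECISION_OWNERS[action_class]
    except KeyError:
        raise UnownedActionError(
            f"No decision owner defined for action class '{action_class}'"
        ) from None
```

Raising an error instead of falling back to a default recipient keeps the ownership gap visible rather than letting it land silently in someone's inbox.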

3. Separate interesting from decision-ready

Organizations need explicit standards for what is strong enough to act on. Otherwise people spend their time re-litigating confidence on every output, which turns review into an expensive improvisation layer. The point is not to suppress exploration. It is to prevent exploratory abundance from masquerading as decision readiness.

4. Create escalation thresholds by action type

What stays local, and what moves upward? What is the threshold for interrupting a leader, a specialist, or a control function? Many AI workflows fail because they generate outputs without a clear model for urgency.
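Thresholds of this kind can be written down as a small table per action type. In the sketch below, the action types, the notion of "exposure" (in whatever unit the organization uses), the levels, and all numbers are assumptions made up for the example.

```python
# Hypothetical thresholds: estimated exposure at which each action type
# moves up a level. The numbers are placeholders, listed highest first.
ESCALATION_THRESHOLDS = {
    "marketing_experiment": [(50_000, "executive"), (5_000, "team_lead")],
    "customer_refund": [(10_000, "executive"), (500, "team_lead")],
}


def escalation_level(action_type: str, exposure: float) -> str:
    """Return who must be interrupted; 'local' means nobody above the team."""
    # Thresholds are ordered from highest to lowest, so the first match wins.
    for threshold, level in ESCALATION_THRESHOLDS[action_type]:
        if exposure >= threshold:
            return level
    return "local"
```

The same exposure of 600 stays local for a marketing experiment but reaches a team lead for a customer refund, which is the point of setting thresholds by action type instead of globally.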

5. Redesign cadence around decision throughput

Continuous systems cannot be absorbed well by purely periodic processes. If a workflow still depends on weekly or monthly interpretation rituals, teams need to redesign how ongoing monitoring, anomaly review, and decision moments fit together. The question is not only whether the system runs continuously. It is whether the organization can commit continuously at the points that matter.

None of this is glamorous. But it is where durable value is built.

The simplest way to think about implementation is:

- Level 1 problems need filtering and routing

- Level 2 problems need triage design and compression logic

- Level 3 problems need ownership and decision rights

- Level 4 problems need explicit escalation and governance

The common mistake is to jump straight from output generation to scale. A better pattern is to spot the boundary, classify the issue, and then redesign the right layer of the workflow before moving further.
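The "spot, classify, redesign" pattern can be sketched as a small classifier over the ladder. The four yes/no flags are a deliberate simplification of the typical signs listed for each level, and their names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class WorkflowSignals:
    # Each flag is a hypothetical yes/no answer from a workflow review.
    candidates_exceed_attention: bool = False
    needs_manual_compression: bool = False
    no_named_decision_owner: bool = False
    high_stakes_without_clear_authority: bool = False


FIXES = {
    0: "no boundary problem detected",
    1: "filtering and routing",
    2: "triage design and compression logic",
    3: "ownership and decision rights",
    4: "explicit escalation and governance",
}


def classify(s: WorkflowSignals) -> int:
    """Return the highest boundary level whose signal is present."""
    if s.high_stakes_without_clear_authority:
        return 4
    if s.no_named_decision_owner:
        return 3
    if s.needs_manual_compression:
        return 2
    if s.candidates_exceed_attention:
        return 1
    return 0


signals = WorkflowSignals(candidates_exceed_attention=True,
                          no_named_decision_owner=True)
level = classify(signals)   # 3
fix = FIXES[level]          # "ownership and decision rights"
```

Checking from the top of the ladder downward reflects one possible reading of the levels: a workflow with several problems is classified by its most severe one.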

What To Measure Instead of Raw Activity

One reason this problem lingers is that organizations use the wrong success metrics.

They measure:

- number of runs

- number of outputs

- number of drafts

- number of automations triggered

- number of hours saved in isolation

Those metrics matter, but they are incomplete. If the real bottleneck is downstream decision velocity, leaders also need to know:

- how many candidate actions are surfaced versus how many are actually committed

- whether cycle time from signal to decision is actually falling

- whether cycle time from decision to action is actually falling

- whether the same humans are becoming the new queue

- where manual filtering and compression are still happening

- how often teams escalate versus quietly work around weak outputs

- how much reviewer time is being burned on false positives

- whether the right roles are consuming the outputs that do reach them

- whether recommendations are followed through

- whether outputs are used in real decision contexts

- whether decision burden is concentrating in one person or team

- what percentage of outputs are actually read

This is one of the biggest shifts in the agentic era: value cannot be inferred from output volume alone. A productive system may still be a slow decision system.
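A few of these measures can be computed from a very simple decision log. The sketch below assumes a hypothetical log format (one record per surfaced candidate, with made-up names and dates) and covers only three of the measures listed above: surfaced versus committed, cycle time from signal to decision, and concentration of decision burden.

```python
from collections import Counter
from datetime import datetime
from statistics import median

# Hypothetical decision log: one record per surfaced candidate.
# "decided_at" is None when nobody has committed to or rejected it yet.
log = [
    {"surfaced_at": datetime(2025, 1, 6), "decided_at": datetime(2025, 1, 8),
     "committed": True, "decider": "ana"},
    {"surfaced_at": datetime(2025, 1, 6), "decided_at": datetime(2025, 1, 16),
     "committed": False, "decider": "ana"},
    {"surfaced_at": datetime(2025, 1, 7), "decided_at": datetime(2025, 1, 10),
     "committed": True, "decider": "ben"},
    {"surfaced_at": datetime(2025, 1, 7), "decided_at": None,
     "committed": False, "decider": None},
]


def decision_metrics(records):
    """Summarize decision flow. Assumes at least one decided record."""
    decided = [r for r in records if r["decided_at"] is not None]
    days = [(r["decided_at"] - r["surfaced_at"]).days for r in decided]
    deciders = Counter(r["decider"] for r in decided)
    return {
        # Share of surfaced candidates that became commitments.
        "commit_rate": sum(r["committed"] for r in records) / len(records),
        # Cycle time from signal to decision, in days.
        "median_days_signal_to_decision": median(days),
        # Share of decisions made by the single busiest decider.
        "top_decider_share": deciders.most_common(1)[0][1] / len(decided),
    }


metrics = decision_metrics(log)
```

A rising `top_decider_share` alongside a flat or growing median cycle time would be one quantitative hint that the same humans are becoming the new queue.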

There is also a commercial implication here. As BCG has argued in its work on pricing in the agentic era, software markets are gradually shifting from charging for access toward charging for completed work, differentiated capability, and measurable outcomes. That shift makes sense only if organizations can actually turn more candidate intelligence into faster, safer decisions. If downstream triage, ownership, and escalation remain unresolved, the promised outcome layer stays fragile. In that sense, operating-model redesign is not only an execution issue. It increasingly determines what kinds of AI value can be credibly packaged, measured, and trusted.

The Strategic Implication

The most important shift in the next few years may not be from humans doing work to agents doing work. It may be from implicit decision pipelines to explicit operating logic.

That logic includes:

- what enters the system

- what gets filtered out

- what counts as evidence

- who reviews what

- where humans are mandatory

- when an item escalates

- what becomes memory versus intervention

- how decisions are recorded and fed back into the system

The more this logic stays informal, political, or trapped in individual judgment, the more added intelligence turns into extra review burden instead of faster action.

Agentic systems do create real leverage. But only when organizations redesign the process around the new center of gravity they create.